How to create a LiteLLM Proxy API key to use in Ozeki AI Gateway

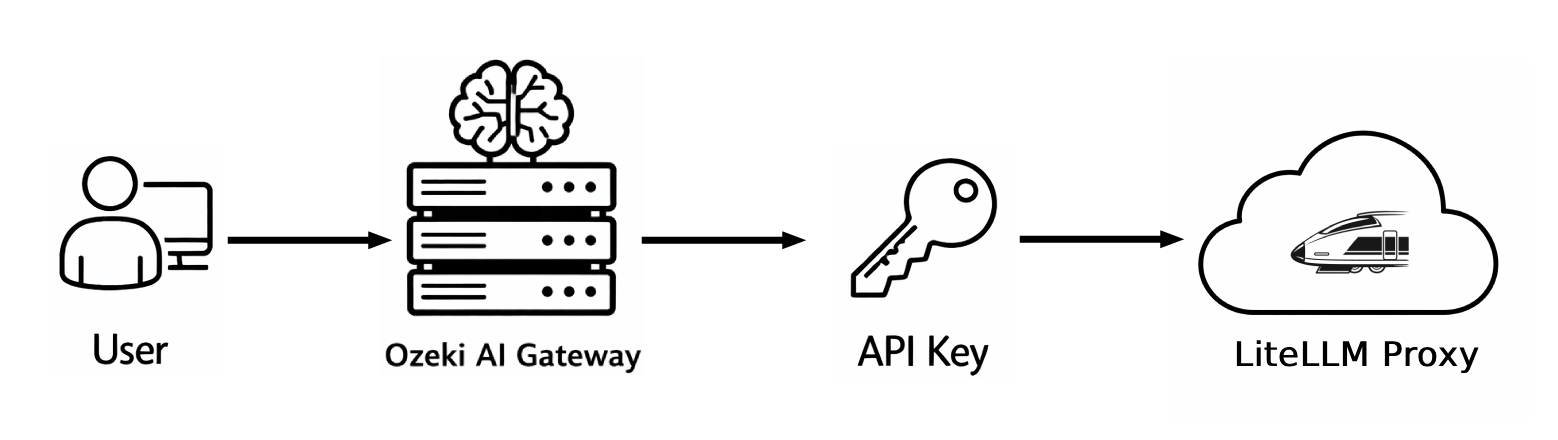

This comprehensive guide demonstrates how to create a LiteLLM Proxy API key and configure it in Ozeki AI Gateway. You'll learn how to access the LiteLLM admin panel, generate a new API key with model access, and set up LiteLLM Proxy as a provider in your gateway. By following these steps, you can connect your Ozeki AI Gateway to LiteLLM Proxy and access multiple AI models through a unified interface.

- API URL: http://localhost:4000/v1 (or your LiteLLM Proxy URL)
- Example API Key: sk-1234567890abcdef...
- Default model: gpt-5.2

What is a LiteLLM Proxy API key?

A LiteLLM Proxy API key is a unique authentication credential that allows applications to access multiple AI models through LiteLLM's unified proxy server. LiteLLM Proxy provides a single interface to access models from OpenAI, Anthropic, Google, and other providers. When configuring LiteLLM Proxy as a provider in Ozeki AI Gateway, you need this API key to establish the connection between your gateway and the LiteLLM Proxy instance.

Steps to follow

- Open LiteLLM Proxy

- Go to admin panel

- Log in to LiteLLM

- Begin key creation

- Enter key name and choose AI models

- Create the key

- Copy API key

- Open Providers page in Ozeki AI Gateway

- Create provider

How to create a LiteLLM Proxy API key video

The following video shows how to create a LiteLLM Proxy API key and configure it in Ozeki AI Gateway step-by-step. The video covers accessing the LiteLLM admin panel, generating a new API key, and setting up LiteLLM Proxy as a provider in your gateway.

Step 0 - Install Ozeki AI Gateway

Before configuring LiteLLM Proxy as a provider, you need to have Ozeki AI Gateway installed and running on your system. If you haven't installed Ozeki AI Gateway yet, follow our Installation on Linux guide or Installation on Windows guide to complete the initial setup.

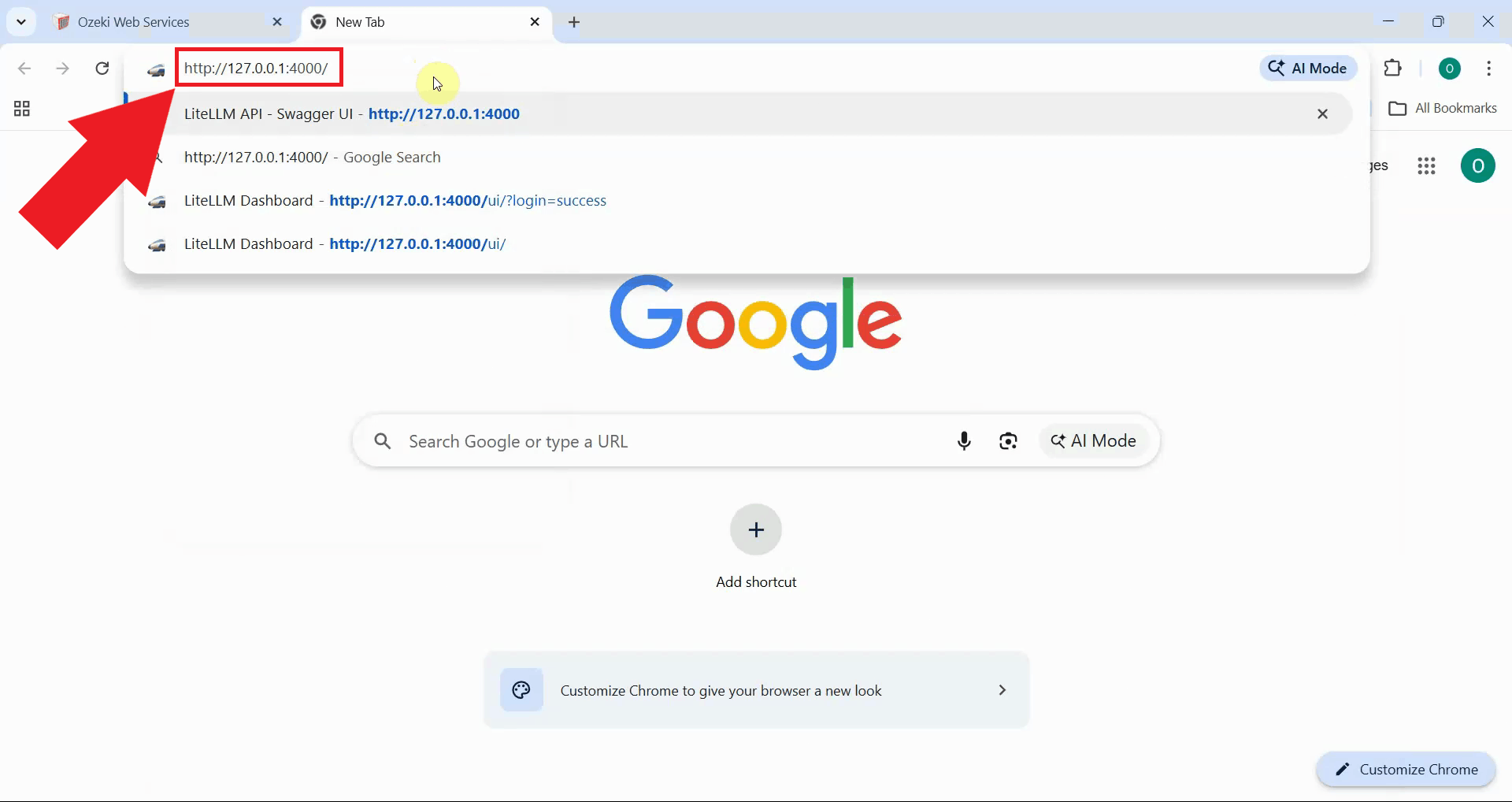

Step 1 - Open LiteLLM Proxy

Open your web browser and navigate to your LiteLLM Proxy URL, typically http://localhost:4000 (or http://127.0.0.1:4000) if running locally. The LiteLLM Proxy interface provides access to the admin panel where you can manage API keys and model configurations (Figure 1).
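If the page does not load, you can check from a script whether the proxy process is running at all. The following is a minimal Python sketch that uses only the standard library. It assumes the proxy listens on http://localhost:4000 and that your LiteLLM version exposes the unauthenticated /health/liveliness endpoint.

```python
import urllib.request

# Assumption: LiteLLM Proxy is running locally on its default port 4000.
BASE_URL = "http://localhost:4000"


def liveliness_url(base_url: str) -> str:
    """Build the liveliness probe URL, tolerating a trailing slash."""
    return base_url.rstrip("/") + "/health/liveliness"


def proxy_is_up(base_url: str = BASE_URL, timeout: float = 5.0) -> bool:
    """Return True if the proxy answers the liveliness probe with HTTP 200."""
    try:
        with urllib.request.urlopen(liveliness_url(base_url), timeout=timeout) as resp:
            return resp.status == 200
    except OSError:
        # URLError, HTTPError, refused connections and timeouts all subclass OSError.
        return False


if __name__ == "__main__":
    print("LiteLLM Proxy reachable:", proxy_is_up())
```

If this prints False, start the proxy or correct the URL before you continue to the admin panel.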

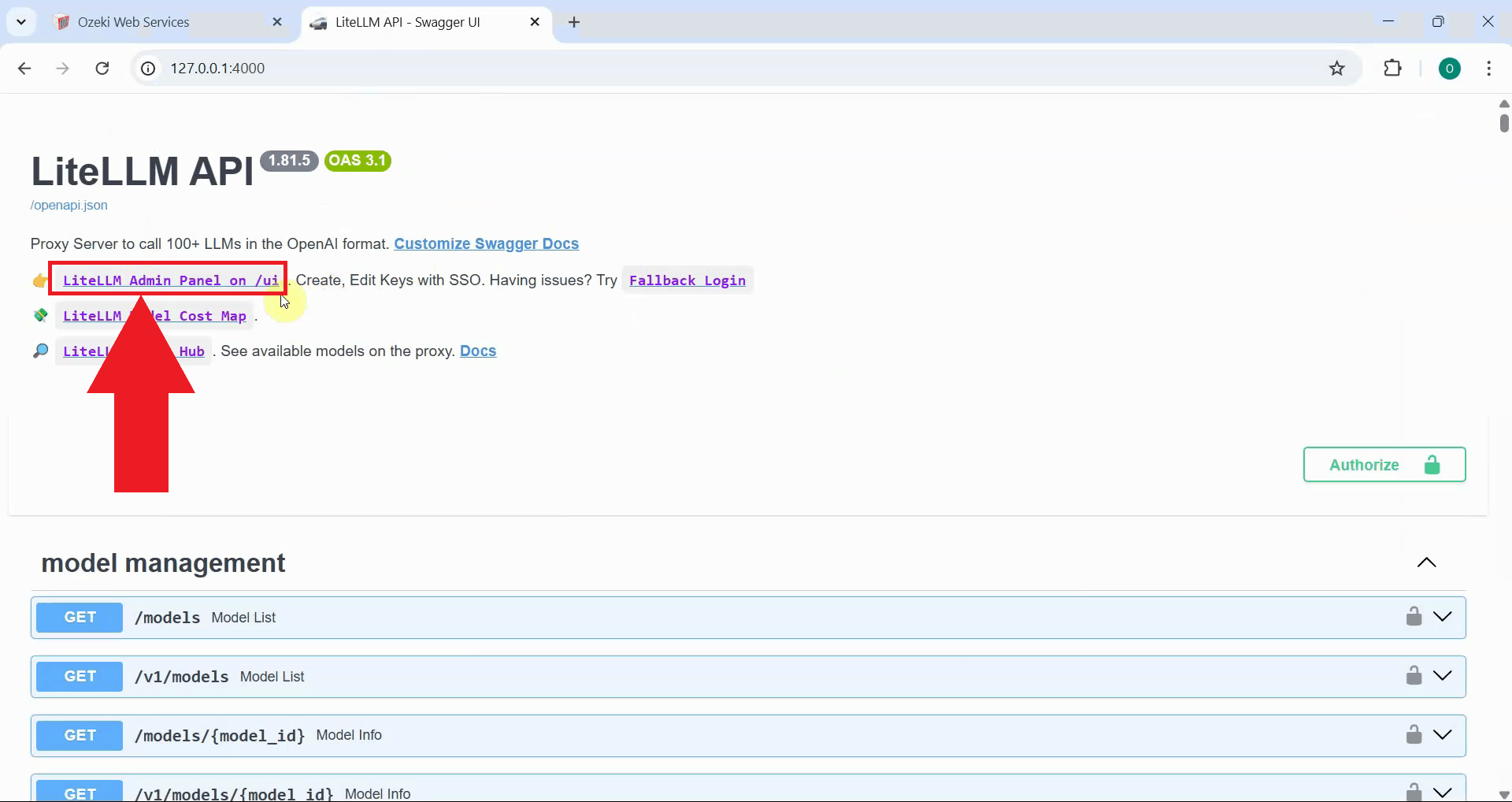

Step 2 - Go to admin panel

From the LiteLLM Proxy home page, locate and click on the link or button to access the admin panel. The admin panel provides administrative controls for managing users, API keys, and proxy configuration (Figure 2).

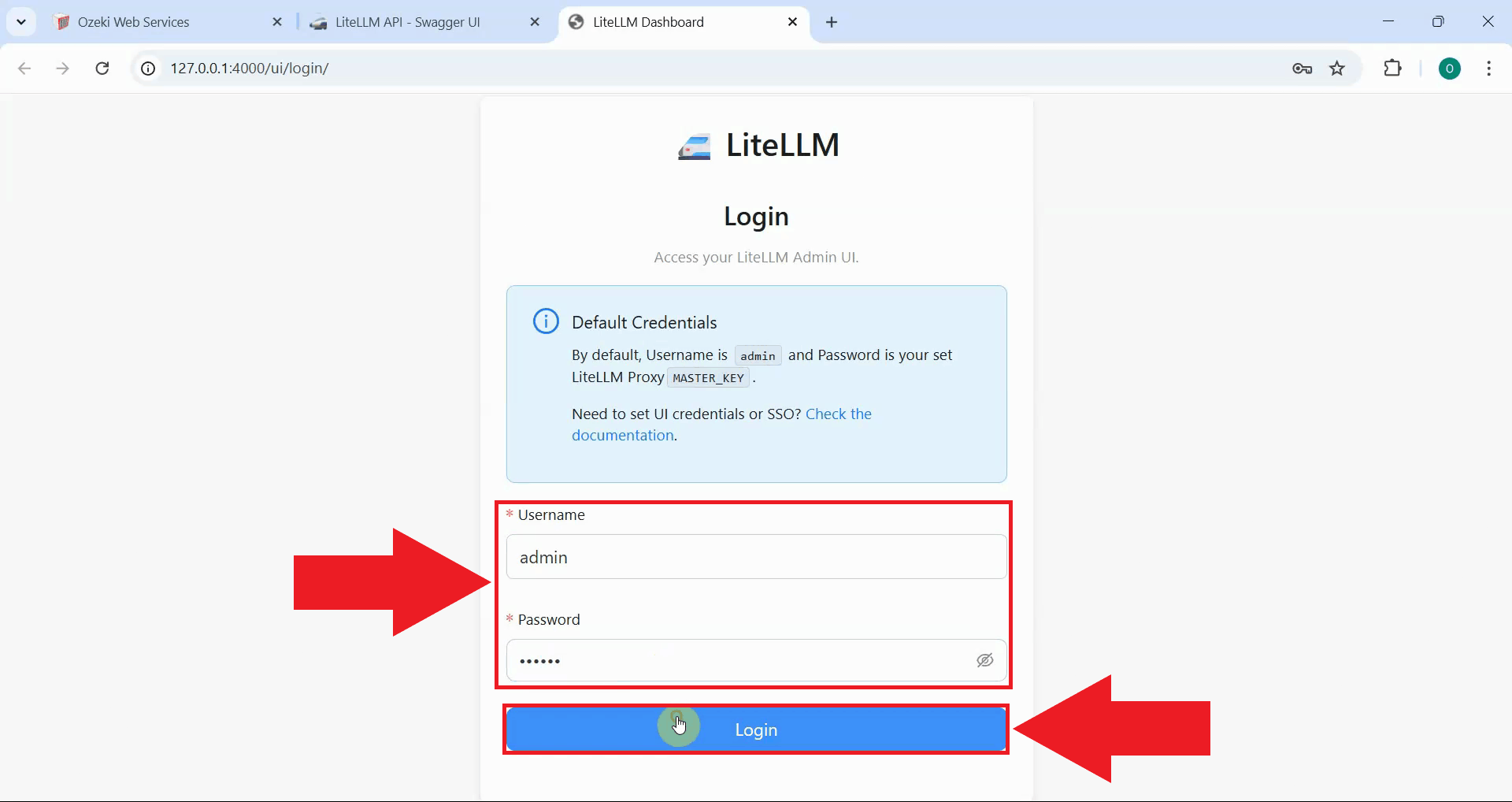

Step 3 - Log in to LiteLLM

Enter your LiteLLM admin credentials to log in to the admin panel. If this is your first time accessing the admin panel, use the admin username and password that were configured when LiteLLM was set up. After logging in, you'll have access to the key management interface (Figure 3).

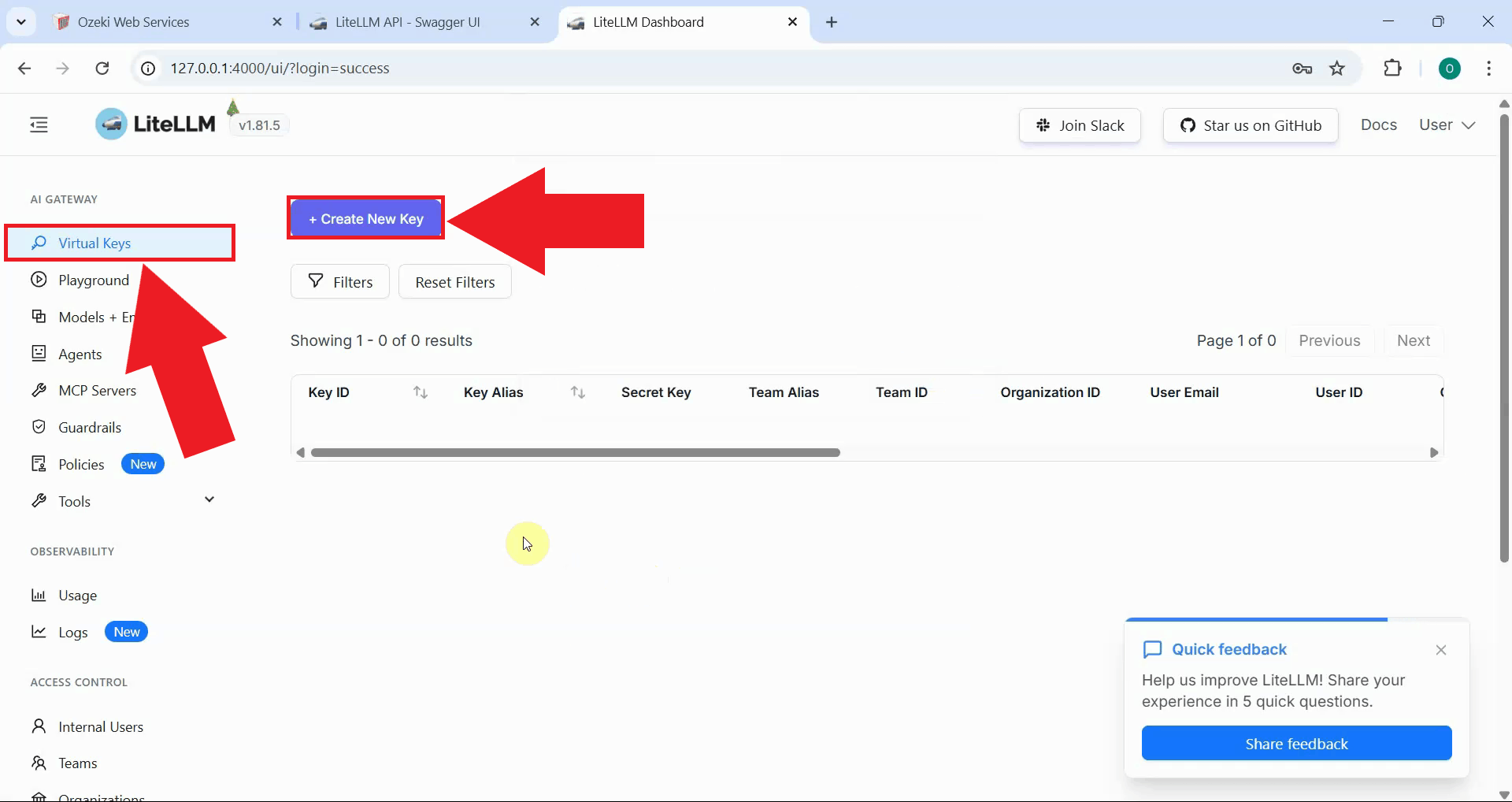

Step 4 - Begin key creation

In the admin panel, open the "Virtual keys" menu and click the button to create a new API key. This starts the key creation workflow, where you configure the key's permissions and model access (Figure 4).

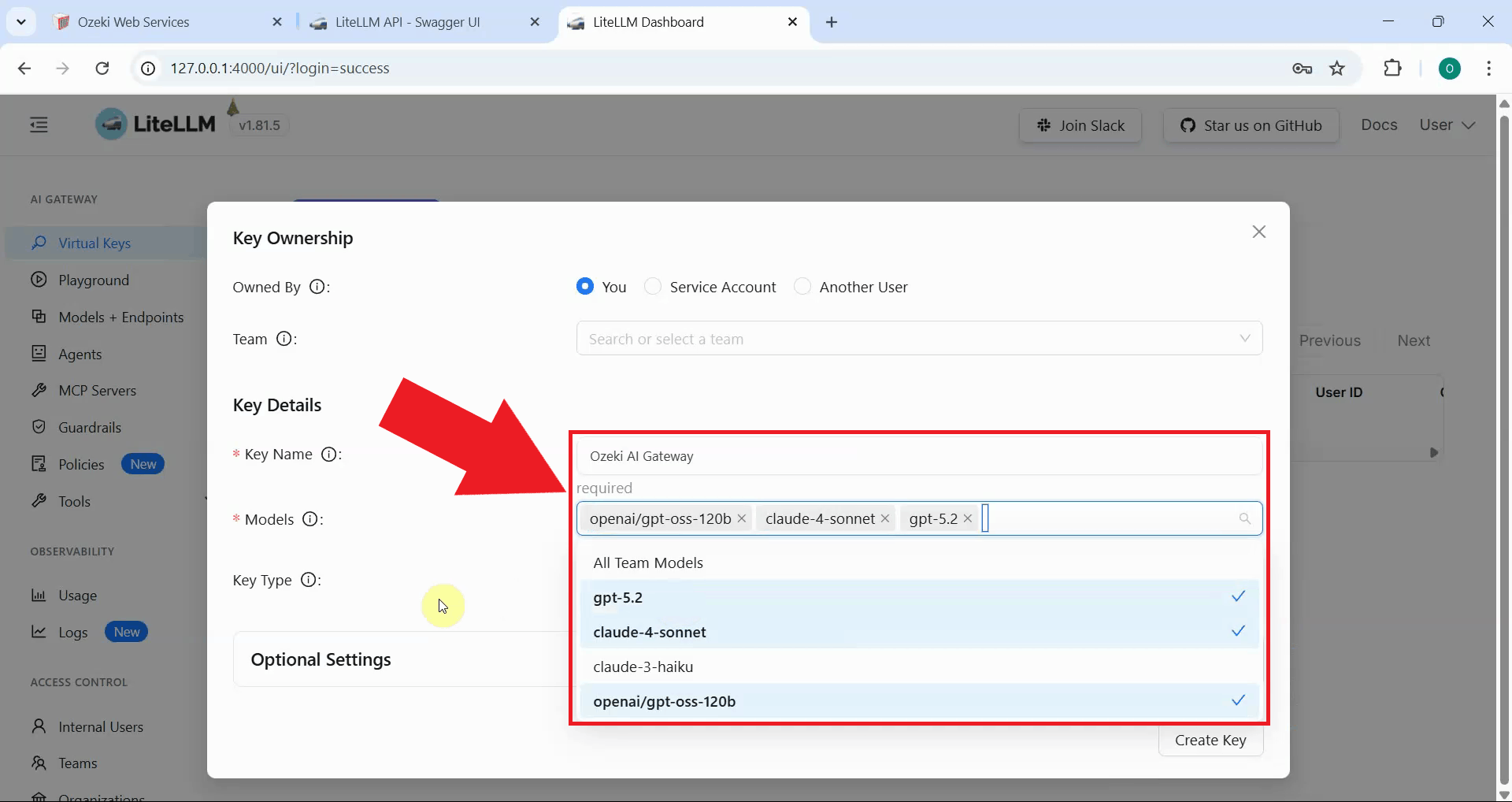

Step 5 - Enter key name and choose AI models

Enter a descriptive name for your API key, such as "Ozeki AI Gateway". Select which AI models this key should have access to from the available models configured in your LiteLLM Proxy. You can grant access to all models or restrict access to specific models based on your requirements (Figure 5).
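The model list in this form reflects the models configured in your LiteLLM Proxy. To check the exact model names from a script, you can query the proxy's OpenAI-compatible /v1/models endpoint. This minimal sketch assumes a local proxy on port 4000 and that you call it with the proxy's master key. Reading that key from a LITELLM_MASTER_KEY environment variable on the client side is an assumption of this example.

```python
import json
import os
import urllib.request

# Assumption: LiteLLM Proxy is running locally on its default port 4000.
BASE_URL = "http://localhost:4000"


def model_ids(models_response: dict) -> list[str]:
    """Extract model names from an OpenAI-style /v1/models response body."""
    return [entry["id"] for entry in models_response.get("data", [])]


def list_models(base_url: str, api_key: str) -> list[str]:
    """Ask the proxy which models the given key can see."""
    request = urllib.request.Request(
        base_url.rstrip("/") + "/v1/models",
        headers={"Authorization": f"Bearer {api_key}"},
    )
    with urllib.request.urlopen(request, timeout=10) as resp:
        return model_ids(json.load(resp))


if __name__ == "__main__":
    # Assumption: the master key was exported as LITELLM_MASTER_KEY beforehand.
    master_key = os.environ.get("LITELLM_MASTER_KEY")
    if master_key:
        for name in list_models(BASE_URL, master_key):
            print(name)
    else:
        print("Set LITELLM_MASTER_KEY first.")
```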

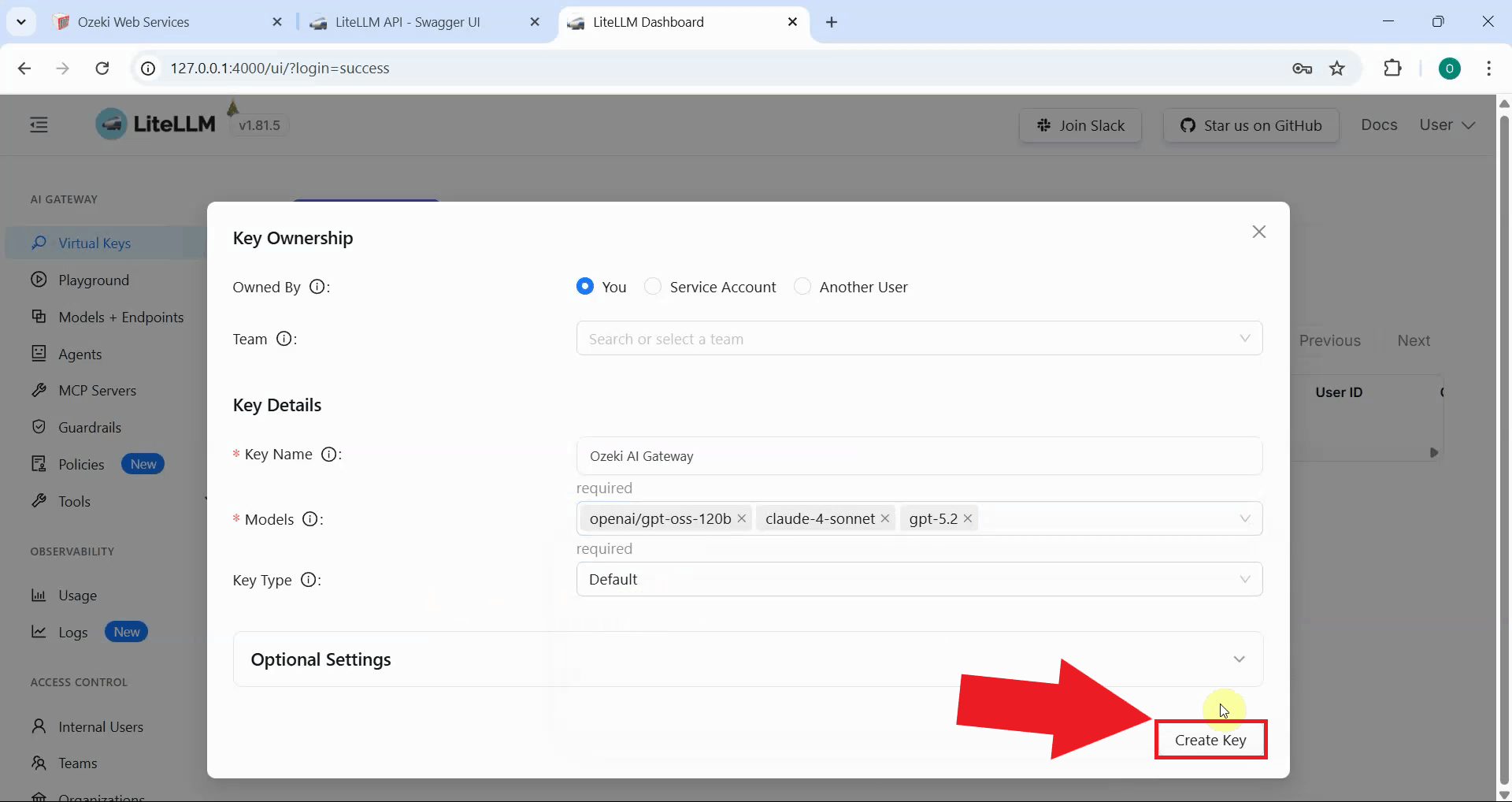

Step 6 - Create the key

After entering the key details and selecting the allowed models, click the "Create Key" button. For testing purposes, the "Default" key type is sufficient. LiteLLM then generates a unique credential for accessing the proxy (Figure 6).
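Steps 4 to 6 can also be scripted. LiteLLM Proxy exposes a key management endpoint, POST /key/generate, which is authorized with the proxy's master key. The sketch below sets only a key alias and a model list. It assumes a local proxy and a master key in a LITELLM_MASTER_KEY environment variable. See the LiteLLM key management documentation for the other fields your version supports.

```python
import json
import os
import urllib.request

# Assumption: LiteLLM Proxy is running locally on its default port 4000.
BASE_URL = "http://localhost:4000"


def build_key_request(base_url: str, master_key: str, alias: str,
                      models: list[str]) -> urllib.request.Request:
    """Build a POST /key/generate request for a key limited to `models`."""
    body = {"key_alias": alias, "models": models}
    return urllib.request.Request(
        base_url.rstrip("/") + "/key/generate",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {master_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def generate_key(base_url: str, master_key: str, alias: str,
                 models: list[str]) -> str:
    """Create the key and return its value from the JSON response."""
    request = build_key_request(base_url, master_key, alias, models)
    with urllib.request.urlopen(request, timeout=30) as resp:
        return json.load(resp)["key"]


if __name__ == "__main__":
    # Assumption: the master key was exported as LITELLM_MASTER_KEY beforehand.
    master_key = os.environ.get("LITELLM_MASTER_KEY")
    if master_key:
        print(generate_key(BASE_URL, master_key, "Ozeki AI Gateway", ["gpt-5.2"]))
    else:
        print("Set LITELLM_MASTER_KEY first.")
```

As with the UI, treat the printed key as shown once and store it immediately.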

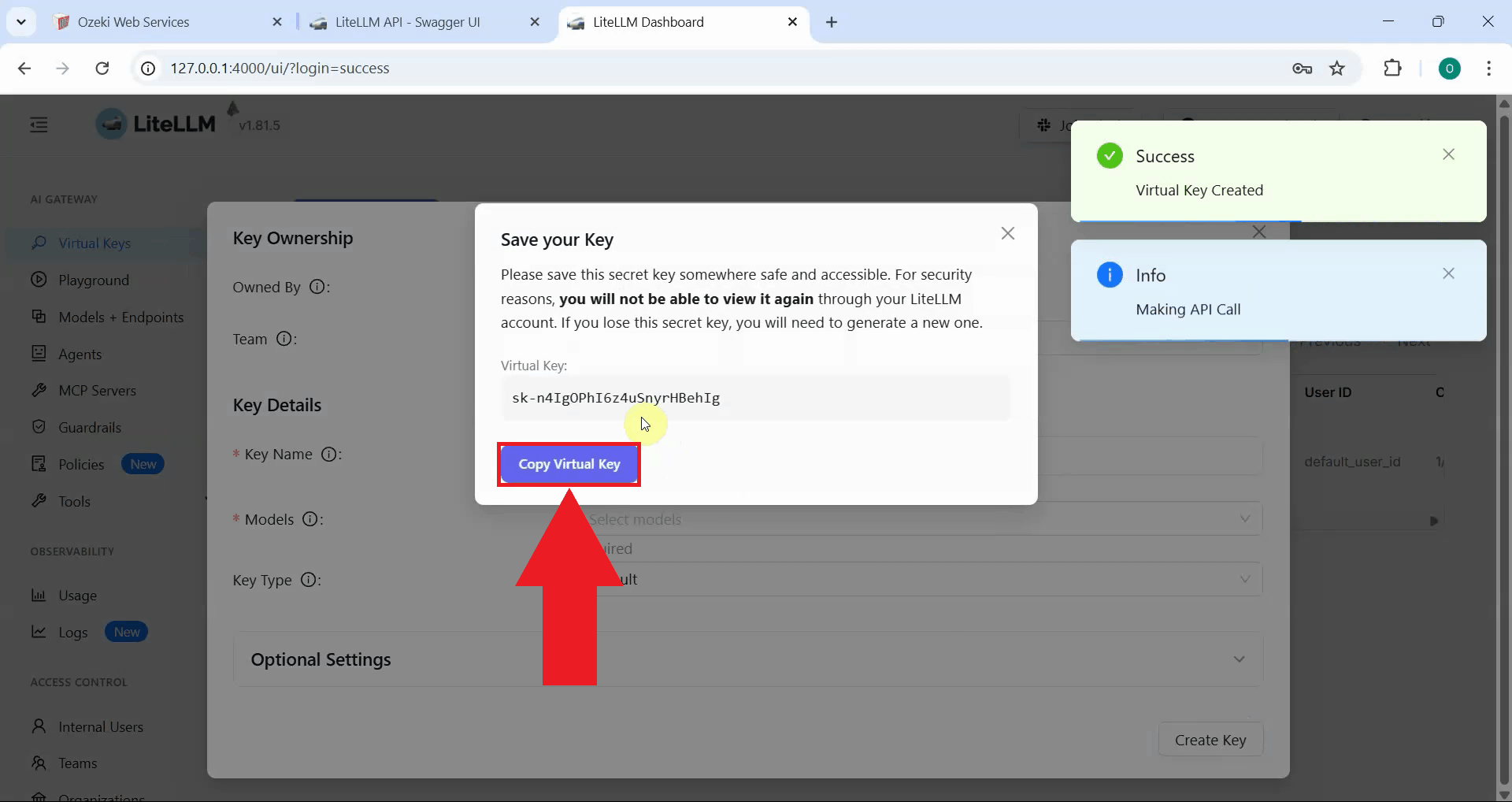

Step 7 - Copy API key

After the key is generated, LiteLLM displays the complete key value. Click the copy button to copy the key to your clipboard. Store this key securely as it provides access to your LiteLLM Proxy and the configured AI models (Figure 7).

The API key is shown only once during creation. Make sure to copy and securely store the key before closing this window.
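Because the key cannot be displayed again, a common pattern is to keep it in an environment variable or a secret store instead of source code, and to mask it whenever it is printed. This sketch assumes a hypothetical LITELLM_API_KEY environment variable. Both helper functions are illustrative and are not part of LiteLLM or Ozeki AI Gateway.

```python
import os
from collections.abc import Mapping

# Hypothetical variable name chosen for this example; use your own convention.
KEY_VAR = "LITELLM_API_KEY"


def load_api_key(env: Mapping[str, str] = os.environ, var: str = KEY_VAR) -> str:
    """Read the LiteLLM key from the environment instead of hard-coding it."""
    key = env.get(var, "").strip()
    if not key:
        raise RuntimeError(f"{var} is not set; store the key copied in Step 7 there")
    return key


def mask(key: str, visible: int = 4) -> str:
    """Hide all but the last `visible` characters so logs never hold the full key."""
    if visible <= 0 or len(key) <= visible:
        return "*" * len(key)
    hidden = len(key) - visible
    return "*" * hidden + key[hidden:]


if __name__ == "__main__":
    try:
        print("Using LiteLLM key", mask(load_api_key()))
    except RuntimeError as err:
        print(err)
```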

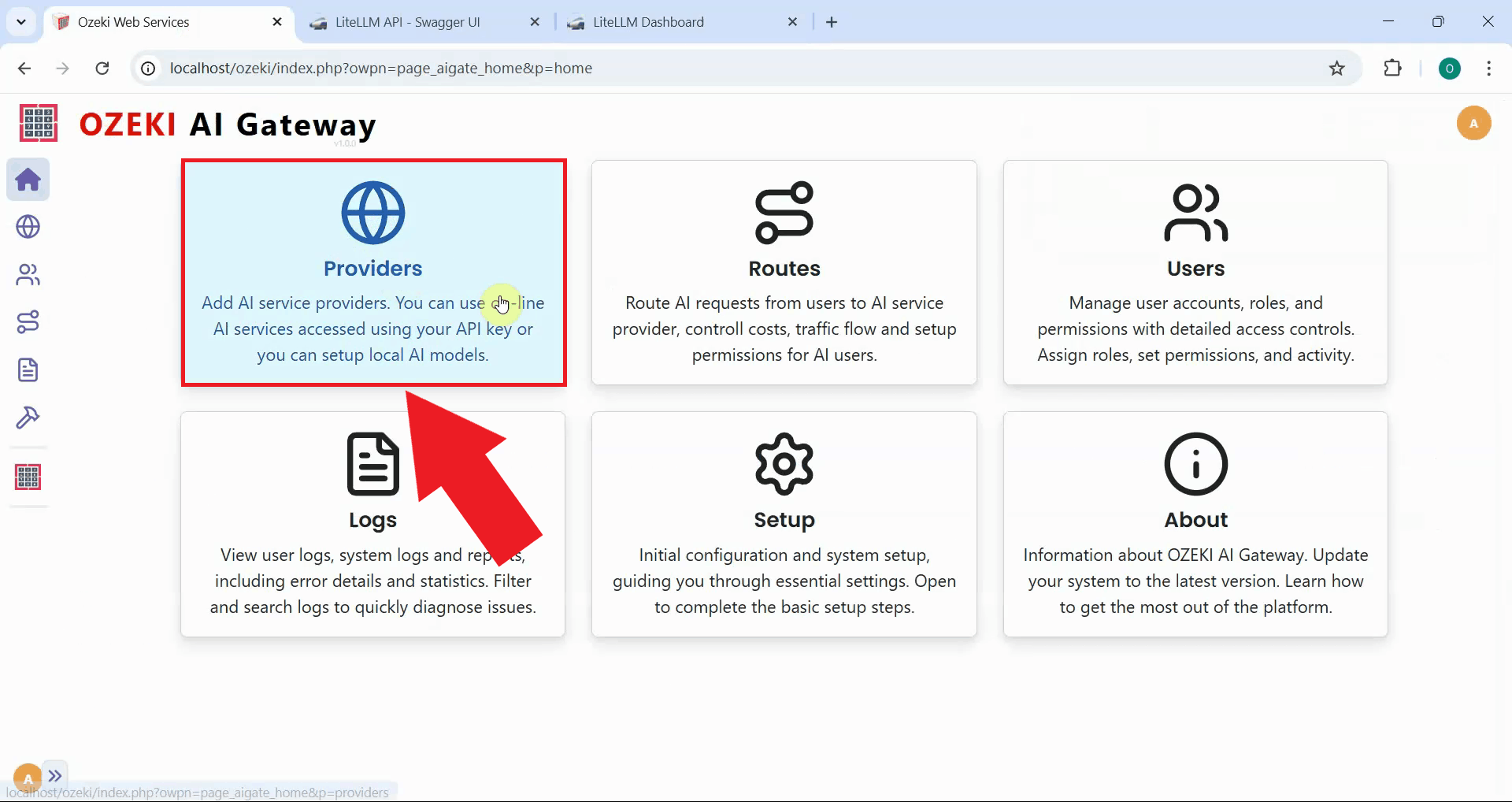

Step 8 - Open Providers page in Ozeki AI Gateway

Open the Ozeki AI Gateway web interface and navigate to the Providers page. This is where you'll configure LiteLLM Proxy as a new provider using the API key you just created (Figure 8).

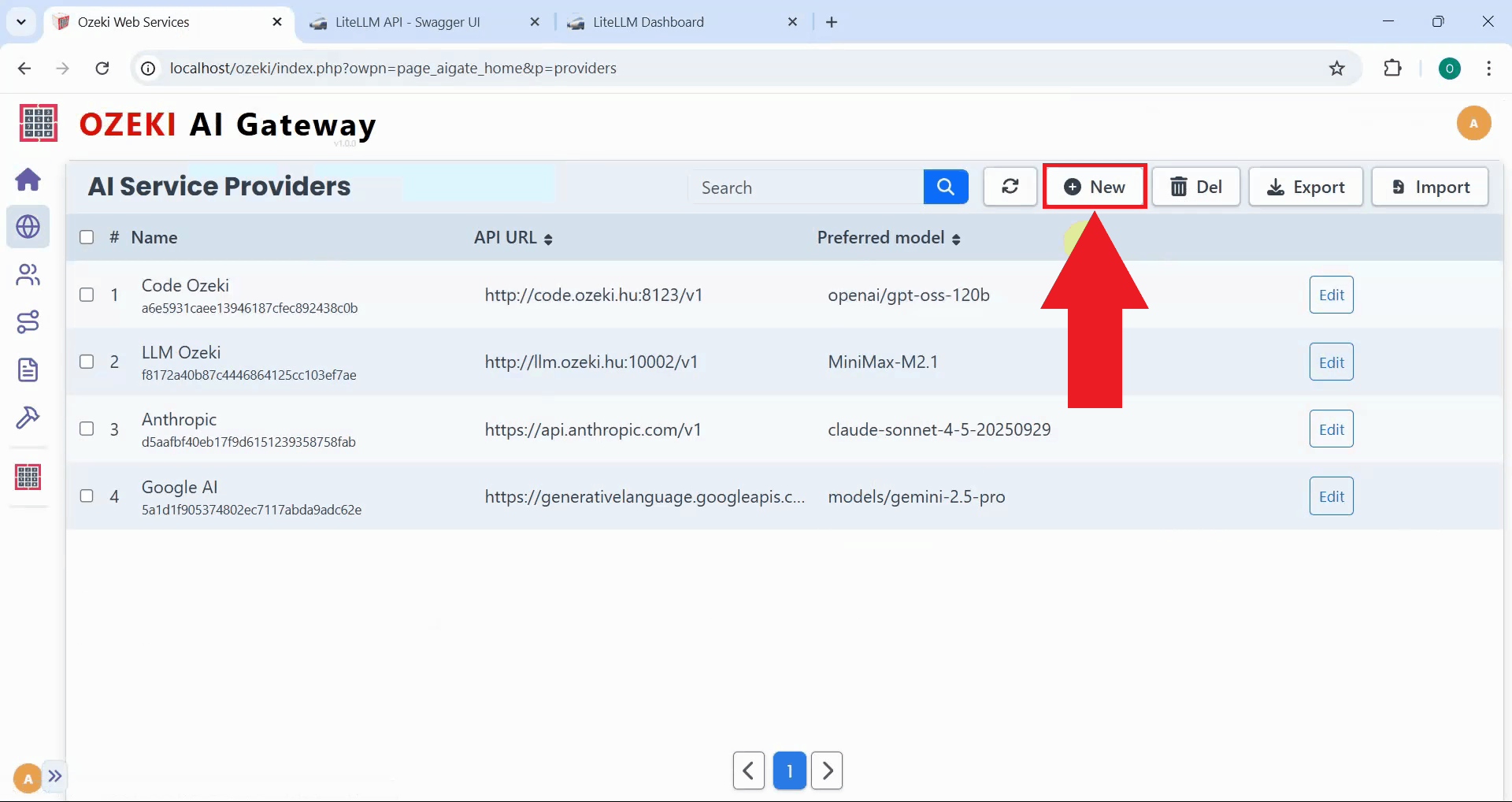

Step 9 - Create provider

Click the "New" button to begin the provider creation process. This opens a form where you'll enter the connection details for LiteLLM Proxy (Figure 9).

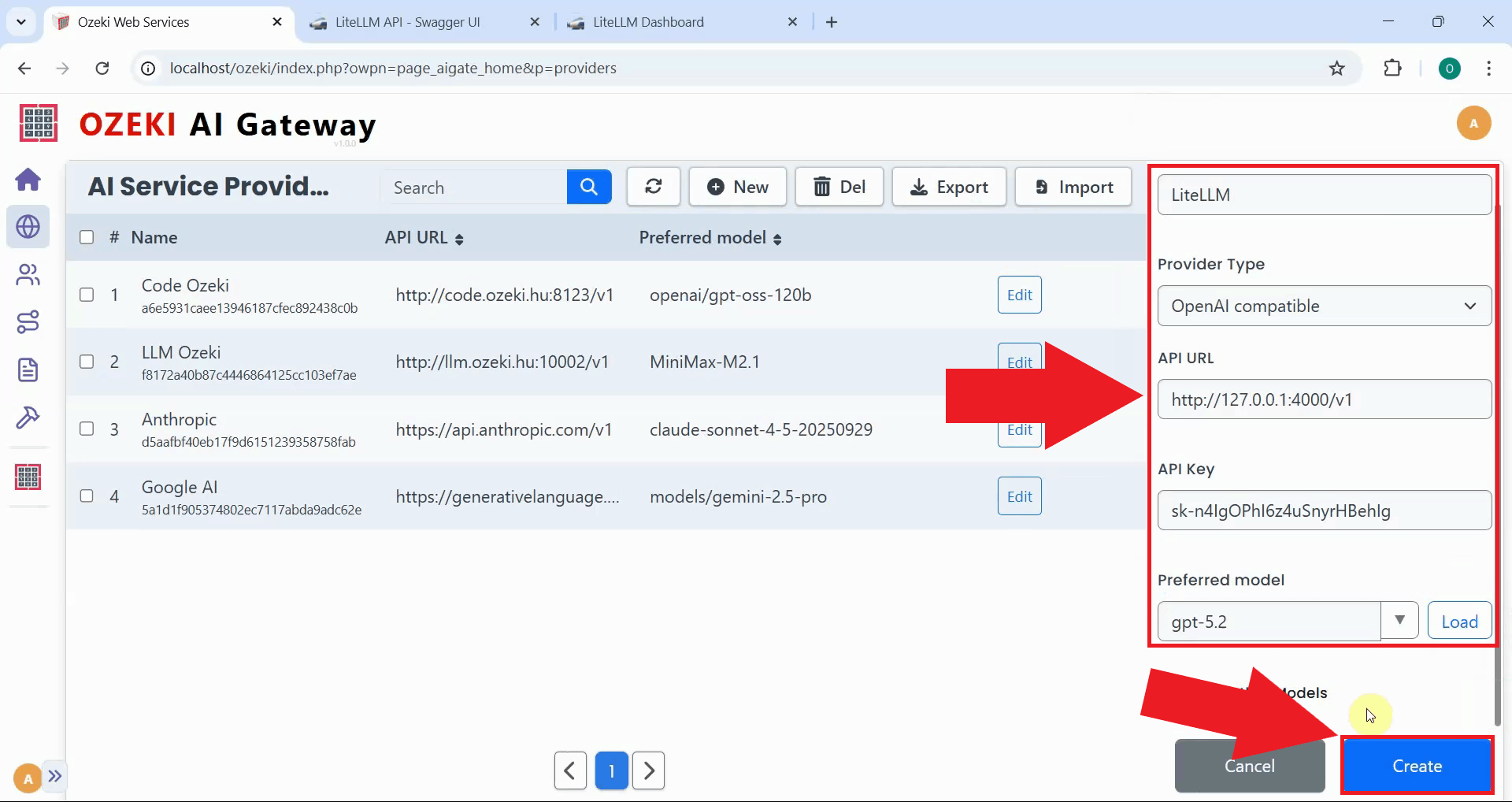

Fill in the provider configuration form with your LiteLLM Proxy details: enter a provider name, select "OpenAI compatible" as the provider type, paste your LiteLLM Proxy API URL (including the /v1 path), and paste your API key. Select your preferred model from the dropdown list, then click "Create" to save the configuration (Figure 10). For a LiteLLM Proxy running locally on the default port, the API URL is:

http://localhost:4000/v1
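Before saving the provider, or later if requests through it fail, you can confirm that the URL, key, and model work together by sending one request directly to LiteLLM Proxy's OpenAI-compatible chat completions endpoint. This tests LiteLLM only, not Ozeki AI Gateway. The sketch assumes the key is stored in a hypothetical LITELLM_API_KEY environment variable and that it was granted access to gpt-5.2. Substitute a model your key can use.

```python
import json
import os
import urllib.request

API_URL = "http://localhost:4000/v1"  # the same URL entered in the provider form
MODEL = "gpt-5.2"                     # assumption: a model this key is allowed to use


def build_chat_request(api_url: str, api_key: str, model: str,
                       prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style POST /chat/completions request with one user message."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        api_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}",
                 "Content-Type": "application/json"},
        method="POST",
    )


def first_reply(response: dict) -> str:
    """Return the text of the first choice in a chat completions response."""
    return response["choices"][0]["message"]["content"]


if __name__ == "__main__":
    api_key = os.environ.get("LITELLM_API_KEY")
    if api_key:
        request = build_chat_request(API_URL, api_key, MODEL, "Reply with OK.")
        with urllib.request.urlopen(request, timeout=60) as resp:
            print(first_reply(json.load(resp)))
    else:
        print("Set LITELLM_API_KEY first.")
```

An HTTP 401 response usually points to the key, and an error naming the model usually means the key was not granted access to that model in Step 5.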

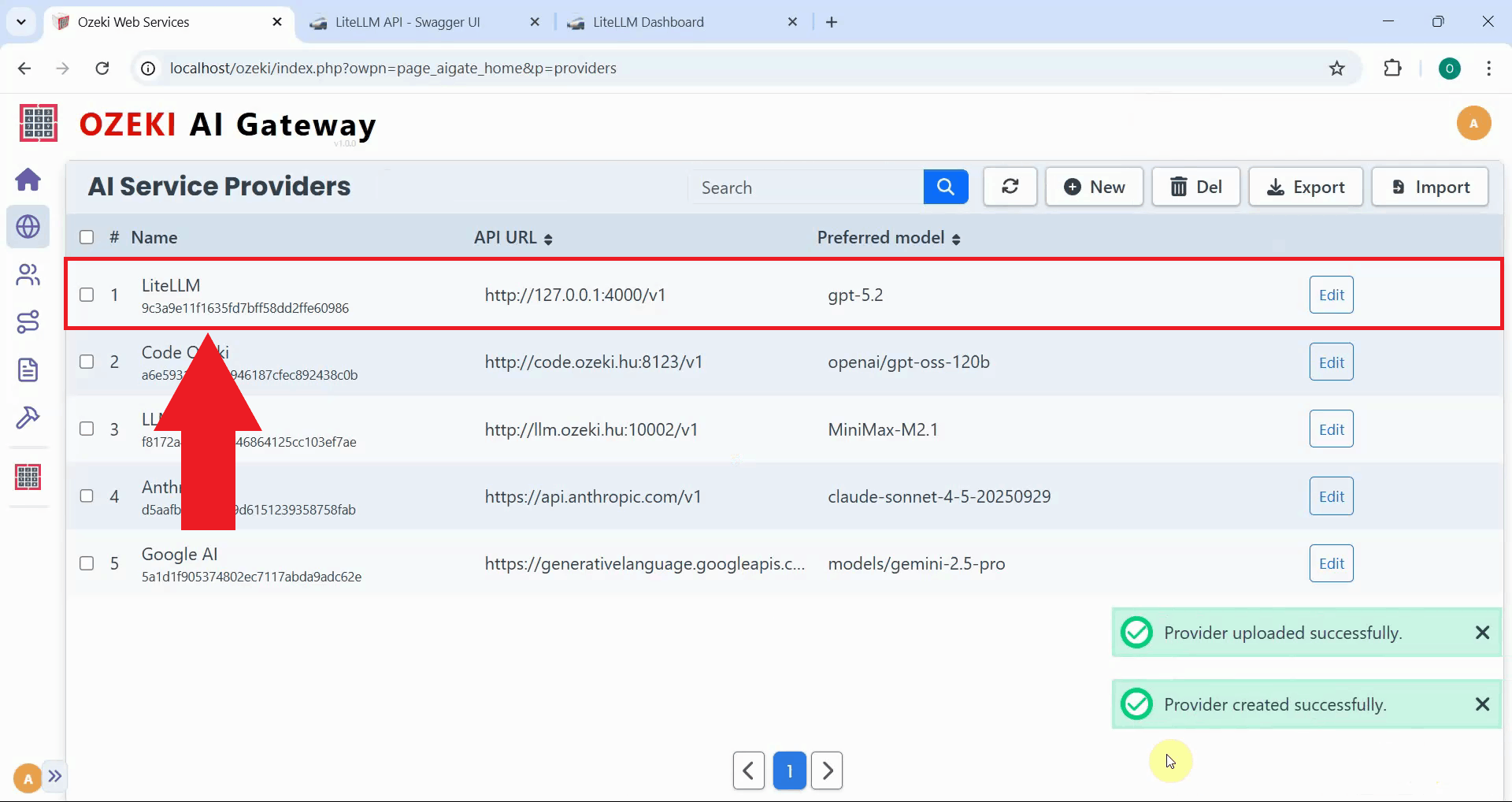

After creation, the LiteLLM Proxy provider is added to Ozeki AI Gateway. The provider now appears in the providers list, confirming that your gateway can route requests to LiteLLM Proxy. You can now create routes that allow users to access multiple AI models through your gateway (Figure 11).

Final thoughts

You have successfully created a LiteLLM Proxy API key and configured it as a provider in Ozeki AI Gateway. Your gateway can now communicate with LiteLLM Proxy and access multiple AI models from various providers through a unified interface. This setup allows you to manage LiteLLM Proxy access for multiple users and applications from a single point.